The Next Iteration of RAG?

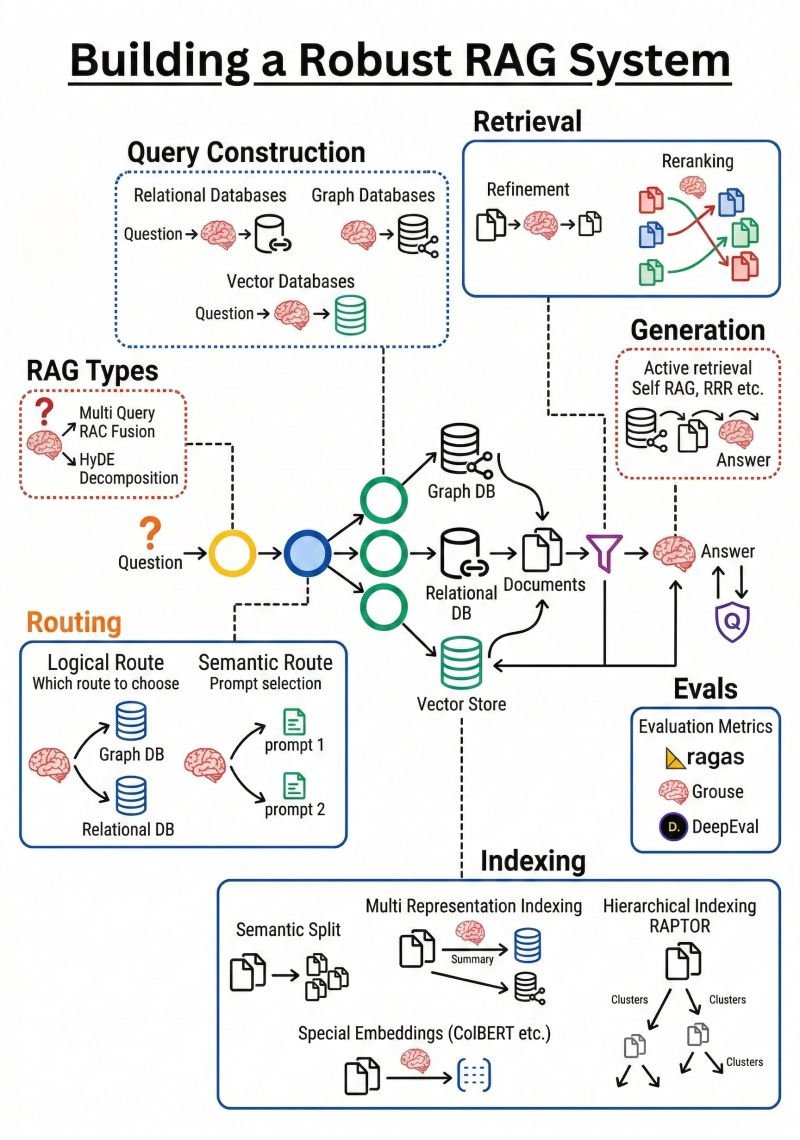

Every aspect of LLM-based solution development remains in flux, with architectures evolving rapidly toward paradigms of greater power and business relevance. Recently I have encountered compelling arguments for what next-generation RAG should look like. A post by Brij Kishore Pandey, titled something along the lines of "Stop doing RAG like it's 2023," resonated strongly because I recognized several advances that could benefit my own work if I incorporated them.

———————————————————-

The boundary between RAG and agentic approaches is itself becoming harder to define as agentic methods grow in strength, reliability, and ease of use. That said, the core principle remains constant: assembling the right context is central to effective LLM-based solutions, regardless of the architectural pattern used.

———————————————————-

Before mapping out Cognosa 2.0, I intend to study the graphic from Pandey's post carefully. I am not, however, convinced that a Swiss Army knife architecture will ever be the optimal solution for every use case. Having already implemented enhanced retrieval types with tunable parameters, flexible filtering, and customized semantic chunking, I question whether every element of the proposed architecture is necessary, or even beneficial, for my intended use cases. More study is warranted. Still, my experience with agents, Claude Code, and the composability of skills and sub-agents gives me good reason to engage seriously with this framework and extract what is most applicable. Balancing the utility of generalizability against the power of finely honed improvements for narrow or specific use cases is a common trade-off for product managers; perhaps where to invest is best decided in the context of my next customer!